ExpressVPN Glossary

Recurrent neural network (RNN)

What is a recurrent neural network?

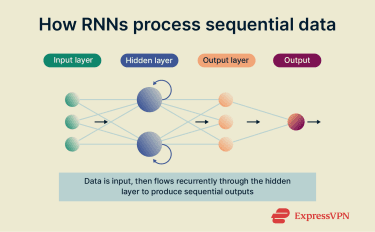

A recurrent neural network (RNN) is a type of artificial neural network designed to process sequential or time-series data. It retains a memory of prior inputs by passing information from one step to the next through its hidden state, which lets it analyze data where order and context matter.

In practice, RNNs are commonly used for tasks such as machine translation, speech recognition, and sequence prediction.

How does a recurrent neural network work?

RNNs are made up of layers of interconnected nodes, with the hidden state computed at one time step fed back into the network as an input at the next step. This feedback loop allows the model to carry contextual information forward in its hidden state, which acts as short-term memory.

By processing sequences element by element, such as words in a sentence or frames in an audio file, the RNN can learn time-dependent patterns.
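The feedback loop described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: the function name `rnn_forward`, the weight names `w_xh`, `w_hh`, and `b_h`, and the use of `tanh` as the activation are all assumptions chosen for the example.

```python
import math

def rnn_forward(inputs, w_xh, w_hh, b_h):
    """Run a single-layer vanilla RNN over a sequence of input vectors.

    inputs: list of input vectors (each a list of floats)
    w_xh:   hidden_size x input_size weights (input -> hidden)
    w_hh:   hidden_size x hidden_size weights (hidden -> hidden)
    b_h:    bias vector of length hidden_size
    Returns the list of hidden states, one per time step.
    """
    hidden_size = len(b_h)
    h = [0.0] * hidden_size  # initial hidden state: nothing remembered yet
    states = []
    for x in inputs:  # strictly sequential: step t needs h from step t-1
        h = [
            math.tanh(
                sum(w_xh[i][j] * x[j] for j in range(len(x)))
                + sum(w_hh[i][k] * h[k] for k in range(hidden_size))
                + b_h[i]
            )
            for i in range(hidden_size)
        ]
        states.append(h)
    return states

# Illustrative (made-up) weights: 1 input feature, 2 hidden units.
w_xh = [[0.5], [-0.3]]
w_hh = [[0.1, 0.2], [0.0, 0.4]]
b_h = [0.0, 0.0]

# The same two inputs in a different order leave the network in a different state.
states_a = rnn_forward([[1.0], [0.0]], w_xh, w_hh, b_h)
states_b = rnn_forward([[0.0], [1.0]], w_xh, w_hh, b_h)
```

Because the hidden state `h` is reused at every step, the final state depends on the order of the inputs, which is what lets the network pick up time-dependent patterns.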

Why are recurrent neural networks important?

RNNs have proven useful in a wide range of applications, including:

- Predicting time-series patterns: Modeling trends in stock markets, weather systems, and more.

- Detecting anomalies in cybersecurity: Identifying malicious sequences in network traffic or system logs.

- Enabling early conversational AI: Powering sequence-to-sequence models in chatbots and machine translation (pre-Transformer era).

- Analyzing real-time signals: Supporting low-latency applications on edge devices (e.g., wearables, embedded systems).

Types of recurrent neural networks

Different types of RNNs have been developed to address specific data processing challenges:

- Vanilla RNN: The basic form of RNN, which processes sequences step by step and uses a feedback loop to retain short-term memory.

- Long short-term memory (LSTM): Designed to manage long-range dependencies and reduce problems such as vanishing gradients during training.

- Gated recurrent unit (GRU): Simplified version of LSTM that uses fewer parameters while maintaining similar performance on many tasks.

- Bidirectional RNN: Processes sequences in both forward and backward directions, improving accuracy when full context is available.
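To make the "fewer parameters" comparison concrete, the sketch below counts trainable parameters for a single recurrent layer. It assumes the common textbook formulation, in which a vanilla RNN has one weight block, a GRU has three (update gate, reset gate, candidate state), and an LSTM has four (input, forget, and output gates plus the candidate cell state), each with one bias vector. The function name and the layer sizes are illustrative, and real libraries may count biases differently.

```python
def recurrent_param_count(input_size, hidden_size, blocks):
    """Count weights and biases for a recurrent layer with `blocks` weight blocks.

    Each block has an input-to-hidden matrix, a hidden-to-hidden matrix,
    and one bias vector (textbook formulation; libraries may differ).
    """
    per_block = hidden_size * (input_size + hidden_size) + hidden_size
    return blocks * per_block

# Number of weight blocks per architecture under the assumption above.
BLOCKS = {"vanilla": 1, "gru": 3, "lstm": 4}

# Illustrative sizes: 128 input features, 256 hidden units.
counts = {
    name: recurrent_param_count(128, 256, blocks)
    for name, blocks in BLOCKS.items()
}
```

Under these assumptions a GRU layer has three quarters of the parameters of an LSTM layer of the same size.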

Challenges of recurrent neural networks

As effective as RNNs are for sequence modeling, they have largely been replaced by transformers, a different type of neural network. Because each hidden state depends on the previous one, an RNN must process a sequence one step at a time and cannot parallelize across it the way a transformer can, so processing time grows linearly with sequence length. Other challenges include:

- Computationally intensive: Training often demands significant computing power and runs more slowly than simpler models.

- Difficulty with long sequences: May struggle with very long inputs due to vanishing gradients and memory limits, though LSTM and GRU architectures can mitigate this.

- High data requirements: Large, well-labeled datasets are typically needed for effective learning.

- Privacy and security concerns: Models exposed to unencrypted or non-anonymized data may pose privacy risks.

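- Vanishing and exploding gradients: Gradients can shrink toward zero (vanishing) or grow exponentially (exploding) as they are backpropagated through many time steps. Vanishing gradients stall learning of long-range patterns, while exploding gradients cause unstable training and numerical overflow.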
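The vanishing and exploding gradient problem can be seen in a deliberately simplified case. The sketch below assumes a scalar, linear recurrence `h_t = w * h_(t-1)` with no activation function, so the gradient of the final state with respect to the initial state is simply `w` multiplied by itself once per time step. The function name and the values of `w` are illustrative.

```python
def gradient_through_time(w, steps):
    """Return d h_T / d h_0 for the scalar linear recurrence h_t = w * h_(t-1).

    Backpropagation multiplies by the same factor w at every time step,
    so the result equals w ** steps.
    """
    grad = 1.0
    for _ in range(steps):
        grad *= w
    return grad

vanishing = gradient_through_time(0.5, 50)  # |w| < 1: shrinks toward zero
exploding = gradient_through_time(1.5, 50)  # |w| > 1: grows exponentially
```

After only 50 steps the first gradient is far too small to drive learning and the second has grown by many orders of magnitude. In a real RNN the factor at each step also depends on the activation function and the full weight matrix, but the same compounding effect applies.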

RNNs vs. other neural networks

While RNNs specialize in sequential or time-dependent data, other neural network types are optimized for different tasks.

Convolutional neural networks (CNNs) excel at recognizing spatial patterns in images, and transformer models use attention mechanisms to process entire sequences in parallel. Although transformers can be trained faster and capture longer-range dependencies, RNNs remain valuable for applications that must process data step by step as it arrives while keeping track of context.

Recursive neural networks are closely related to RNNs. The fundamental difference is that whereas RNNs operate on sequences, recursive neural networks apply the same weights recursively over hierarchical data structures like parse trees.
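As a rough illustration of the difference, the sketch below applies one shared set of weights recursively over a small binary tree rather than along a sequence. Everything in it is an assumption made for the example: the nested-tuple tree format, the function names `compose` and `encode`, the `tanh` activation, and the toy word vectors and weights.

```python
import math

def compose(left, right, w_left, w_right, bias):
    """Combine two child vectors into one parent vector using shared weights."""
    size = len(bias)
    return [
        math.tanh(
            sum(w_left[i][j] * left[j] for j in range(size))
            + sum(w_right[i][j] * right[j] for j in range(size))
            + bias[i]
        )
        for i in range(size)
    ]

def encode(tree, embeddings, w_left, w_right, bias):
    """Encode a binary tree given as nested tuples with word strings at the leaves."""
    if isinstance(tree, str):  # leaf: look up the word vector
        return embeddings[tree]
    left, right = tree
    return compose(
        encode(left, embeddings, w_left, w_right, bias),
        encode(right, embeddings, w_left, w_right, bias),
        w_left, w_right, bias,
    )

# Toy (made-up) word vectors and weights with 2 dimensions.
embeddings = {"the": [1.0, 0.0], "cat": [0.0, 1.0], "sat": [1.0, 1.0]}
w_left = [[0.5, 0.0], [0.0, 0.5]]
w_right = [[0.3, 0.1], [-0.2, 0.4]]
bias = [0.0, 0.0]

# Same words, two different tree structures.
left_branching = encode((("the", "cat"), "sat"), embeddings, w_left, w_right, bias)
right_branching = encode(("the", ("cat", "sat")), embeddings, w_left, w_right, bias)
```

The same `compose` weights are reused at every node, and the resulting vector depends on the shape of the tree as well as on the words it contains.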
